SQL Server Update Statistics Best Practices

Update Statistics in SQL Server is very important maintenance activity to keep database’s statistics up to date. This helps SQL Server query optimizer to generate optimum query plan and improve database performance as well. Here, i will explain the best practices to run SQL Server update statistics on very large tables or databases.

We should be very careful while running any of the database maintenance activities on production databases. It becomes more crucial if your database size is very very big or some critical business application is using this database. Yesterday i had similar experience in one of our SAP system. Application team had reported performance issue while accessing one of the SAP system. DBAs were involved and identified a long running query that was causing the performance issue. This long running query was executing update statistics SQL Server operation on one of the biggest table in the database. This activity took very long time around 12 hours and never completed. Finally team has killed this transaction as advised by SAP support to improve the performance.

Understanding Update Statistics in SQL Server

SQL Server uses statistics to generate fastest query plan for any transaction so we should ensure all statistics must be up to date to get better query performance. As per MSDN “Updating statistics ensures that queries compile with up-to-date statistics. However, updating statistics causes queries to recompile. We recommend not updating statistics too frequently because there is a performance tradeoff between improving query plans and the time it takes to recompile queries. “

Here, first i will explain one of my issue as case study and then will give you SQL update statistics best practices. There are multiple things that can lead to longer duration of this execution, so first we should understand the issue before reaching on any conclusion.

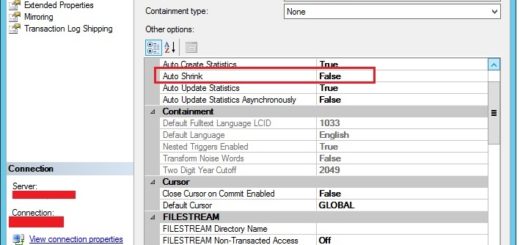

After looking into SQL Server transactions, we found that SQL Server update statistics was being executed with FULLSCAN option. This was a potential reason behind taking it for longer duration. Avoid running update statistics with FULLSCAN option while running it on very big tables or databases because it scans each and every record in the table that will degrade the system performance and took more time to complete. Although, you should always rethink before making a decision to run update statistics on your database if auto update statistics has been set to ON. As it was SAP ECC database so we checked the SAP documentation as well and SAP also suggests to not run it regularly if auto update statistics is set to ON. This is SQL Server auto update statistics best practices for very large databases.

In some cases, if you still have to run the update stats then always Use it without mentioning FULLSCAN parameter or use sp_updatestats stored procedure. If you still need to run WITH FULLSCAN then do not run the UPDATE STATISTICS SQL Server command against the entire table. Identify the column or index statistic that needs the special treatment, and only update that one with FULLSCAN.

Sometimes, SQL Server update statistics command may take very long time even after following above measures. This could be because of the bug reported in attached link. This has been fixed in below CU updates. Check your SQL Server version and apply them to fix this issue if applicable.

- Cumulative Update 2 for SQL Server 2019

- Cumulative Update 18 for SQL Server 2017

- Cumulative Update 11 for SQL Server 2016 SP2

SQL Server Update Statistics Best Practices

Below is the list of best practices i have mentioned to be considered while running update statistics in SQL Server. Have a look at them.

- Running Update Statistics on very big tables or database can increase huge IO pressure on your disk subsystem. SQL Server has very good feature to keep running it in real time by setting value auto update statistics to ON. If auto update statistics is set to ON then it is strongly recommended to not run it regularly on any databases. We can run it only on those tables where this setting is set to OFF.

- In some cases, if you still have to run the update stats then always Use it without mentioning FULLSCAN parameter or use sp_updatestats stored procedure. If you still need to run WITH FULLSCAN then Do not run the UPDATE STATISTICS command against the entire table. Identify the column or index statistic that needs the special treatment, and only update that one with FULLSCAN.

- Always run such database maintenance activities during maintenance windows. It is not recommended to run during business hours.

- If you are facing performance issue during update stats execution then you should check the possibilities of potential bug reported in attached link.

- Never run Adhoc and manual Update stats on any of the very big tables if Auto Update Statistics is set to ON because Update stats is very costly operations in terms of heavy IO activities. It does acquire schema-stability locks so it can potentially be blocked by a DDL operation (like ALTER TABLE).

- One way to find out whether you should run update stats or not is to check if your statistics are stale in the execution plans or not. If estimated rows greatly differ from your actual rows returned in execution plan then that is a good indication of potential update stats candidate.

- Ask Migration team (In case recently migrated) whether they have executed rebuild index or update stats after migrating data to SQL Server as part of post upgrade activity? Ideally it is recommended to run update stats after massive data load because auto update stats does not automatically update all records.

- If you’ve got tables with millions of rows, and you’ve found that statistics aren’t updating fast enough, you can consider:

- Turning on Trace Flag 2371 to make auto-update statistics run more frequently on tables with large amounts of rows.

- Using a third party script to handle statistics maintenance along with your index maintenance

- One way to find out if your statistics are stale is to look at the execution plans and if you estimated rows greatly differ from your actual rows returned then that is a good indication that the interval needs to be upped. If you want to do update Statistics manually you should first know When Statistics are updated automatically.

- If the SQL Server query optimizer requires statistics for a particular column in a table that has undergone substantial update activity since the last time the statistics were created or updated, SQL Server automatically updates the statistics by sampling the column values (by using auto update statistics).

Update Statistics on AlwaysON Secondary Replica

Temporary statistics are an improvement added in SQL Server 2012 for read-only databases. When a database is read-only, queries can’t create statistics in the database– because those require writes. As of SQL Server 2012, temporary statistics can be created in tempdb to help optimization of queries.

This is incredibly useful for:

- Readable secondaries in an AlwaysOn Availability Group

- Readable logshipping secondary databases

- A point in time database snapshot which is queried (whether against a database mirror or live database)

- Any other read-only database that has queries run against it

Prior to SQL Server 2012, if you use logshipping for reporting and the same workload does not run against the log shipping publisher, consider manually creating column level statistics. (Or upgrading SQL Server.)

Read More:

- Understanding Database Autogrowth in SQL Server

- Understanding Database Shrink Operation

- SQL Server AlwaysON Interview Questions & Answers

- How to Fix SQL Error 1005: A Comprehensive Guide - April 9, 2023

- How to Fix SQL Server Error 207 – Invalid Column Name - April 9, 2023

- How to Fix SQL Error 1045: Resolving Access Denied Issues - April 8, 2023

1 Response

[…] + Read More […]